B-CALM

You can't train what you can't measure.

High-stakes decisions happen fast and leave no trace. After-action reviews rely on memory and subjective accounts. There's no structured way to extract what actually drove a decision. The stress cues, the crowd dynamics, the split-second delegation patterns all go unmeasured. Without that data, training stays anecdotal and trust stays unquantified.

B-CALM sees what cameras miss.

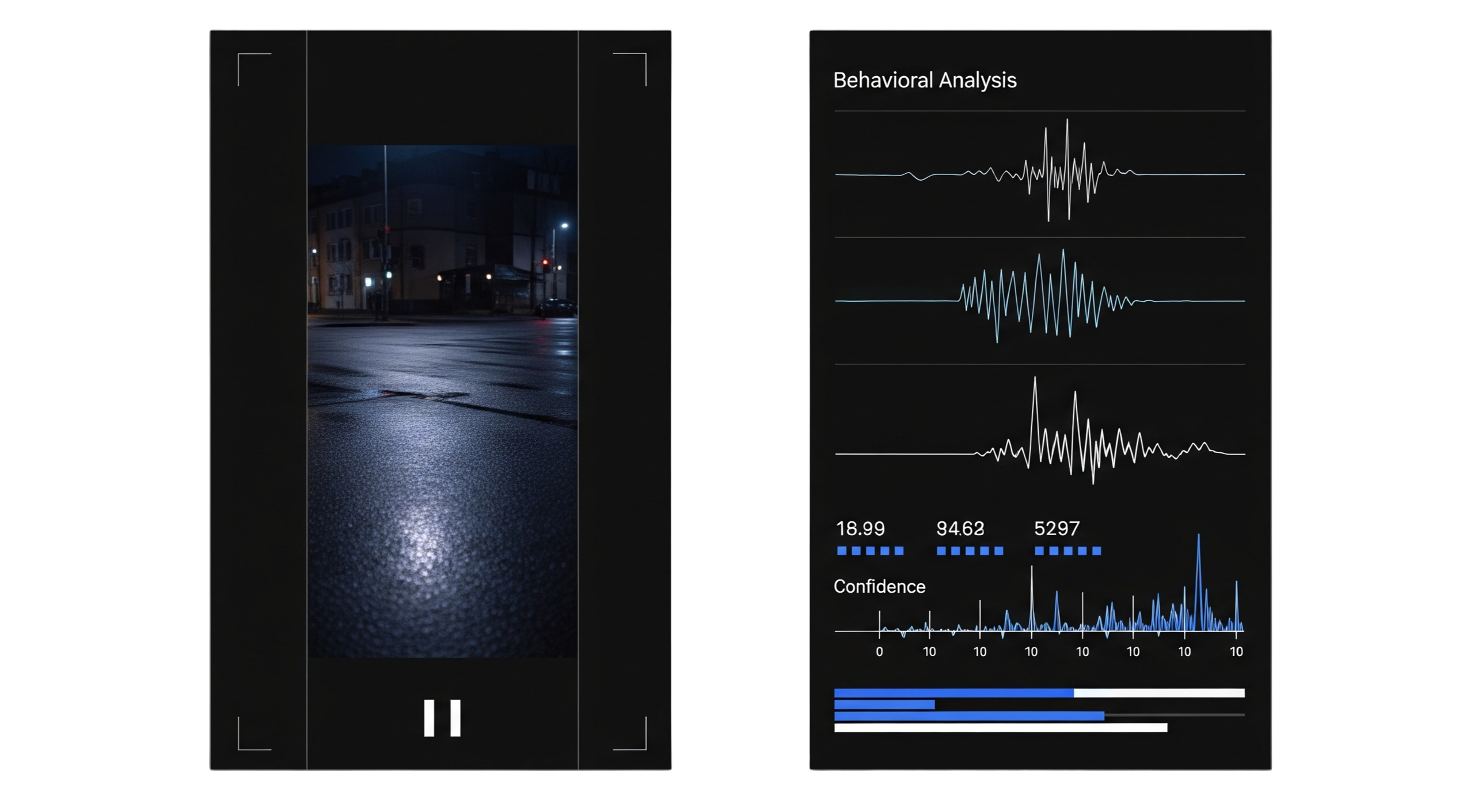

Behavioral-Context Architecture for Latent Modeling. It turns unstructured video, audio, and text into measurable decision-maker attributes.

Built to see what humans miss.

Multimodal Fusion

Integrates voice stress, crowd size, timing, and visual cues to predict and explain decision styles. It goes beyond what any single-source model can do.

KDMA Identification

Extracts latent psychological attributes, such as risk appetite and a tendency to satisfice vs. maximize, from unstructured data to anticipate human choices under pressure.

Traceable Transparency

Every prediction links back to replayable frames, transcript spans, and audio with feature attributions. Full auditability, no black boxes.

Structured Analytics

Converts raw footage into quantifiable markers: escalation triggers, outcome classifications, and delegation patterns. All searchable and comparable.